After Almaty, one thing is clear: AI in education is no longer a tool question. It is an implementation question.

Education does not need another wave of AI enthusiasm.

It needs decisions.

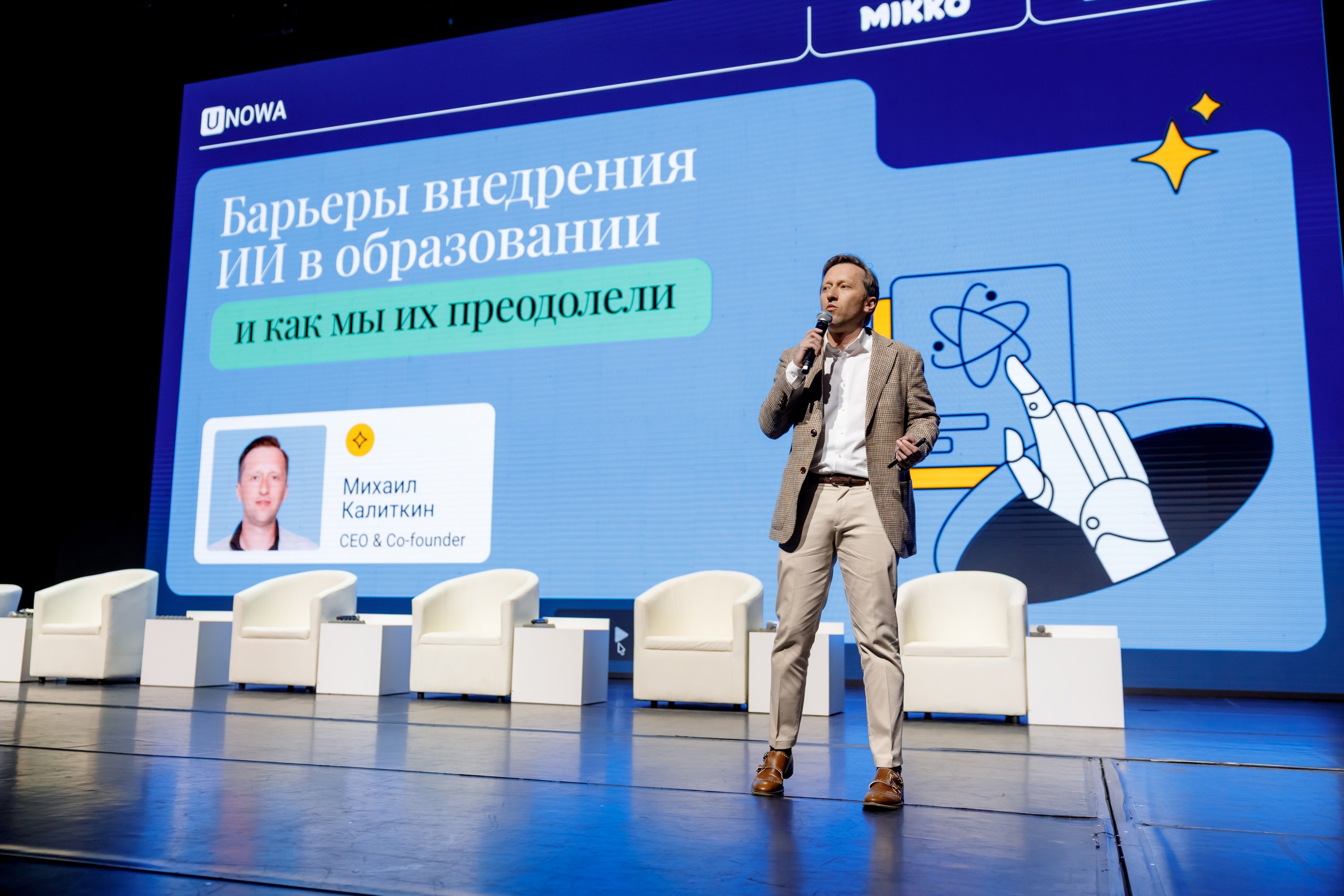

That is what made the AI for Education International Summit in Almaty important. The event, held on 22–23 April 2026 at the Palace of the Republic, was framed not as a showcase of isolated tools, but as a working conversation about how AI is being brought into real education systems, with all the complexity that entails. The official summit agenda focused on practical application, safe and ethical adoption, cybersecurity, digital inclusion, and international cooperation. Public posts from speakers and participants after the summit show the same pattern: the conversation was not about whether AI belongs in education, but about how to use it responsibly, at scale, and in ways that actually improve teaching and learning.

That shift matters.

For ministries, universities, school leaders, and education partners, the real challenge is no longer selecting an AI product. The harder question is whether the system around that product is ready.

1. The summit confirmed a major shift: AI is now a system design issue

The most important signal from Almaty was structural.

The summit programme did not treat AI as a standalone innovation topic. It linked AI to strategy, teaching practice, inclusion, ethics, university transformation, and risk management. Sessions ranged from “Secure AI within Higher Education with Chrome and Gemini” and “Creating Visually Powerful Learning with AI” to talks on adaptive teaching, inclusive education, cybersecurity, and AI ethics. That is a very different agenda from the earlier stage of the market, where events were often dominated by product demos and abstract promises.

In other words, the question is changing.

Not: Which AI tool should we adopt?

But: What kind of learning system are we building around it?

That distinction is critical. A strong tool placed inside a weak system will still fail. If schools do not have a clear implementation pathway, if teachers are expected to absorb new workflows without support, if data governance is unclear, and if inclusion remains an afterthought, AI does not modernise education. It simply adds another layer of pressure.

This is why the most relevant takeaway from Almaty is not technological. It is operational. Education systems now need implementation logic, not just innovation language. That is also the direction reflected in UNOWA’s own public framing of the summit: the real question is how ideas work inside real education systems.

2. Inclusion was not a side discussion. It was a test of seriousness.

Another important signal from the summit: inclusion was not treated as a separate social track.

It was built into the core programme.

The official agenda included sessions such as “Scaling Inclusive Education with AI: Turning Global Innovation into Impact” and “AI for Inclusive Education: Ensuring Equity and Access for Every Learner.” A post-summit recap from Semey Medical University also emphasized that special attention was given to inclusive education, including support for children with special educational needs, personalized learning, emotional intelligence, and students’ psychological well-being.

That matters because inclusion is where many AI claims are truly tested.

It is easy to say a tool is available to everyone. It is much harder to prove that it supports learners with different needs, different contexts, and different levels of readiness. One participant’s public reflection after the summit captured this clearly: most student challenges are invisible, access alone is not enough, and AI only helps when it is used thoughtfully. She also pointed to a reality many education systems know well but do not always state directly: teachers do not lack care — they lack time.

This is one of the strongest strategic lessons from Almaty.

Inclusive AI is not about adding a few accessibility features to a platform. It is about designing learning environments where support is normalised, where teachers can act on information, and where students are not left alone with “smart” tools that still depend on human guidance.

For ministries and school systems, this changes the evaluation criteria. The right question is no longer whether a platform uses AI. The right question is whether it strengthens inclusive practice in classrooms, reduces friction for educators, and supports continuity rather than fragmentation.

3. Teacher workload emerged as a central implementation issue

When people talk about AI in education, they often speak first about student outcomes.

Almaty pointed to something equally important: teacher capacity.

Several signals across the summit point in the same direction. Official descriptions highlighted practical implementation and real-world application. ALT University’s recap emphasized that the summit focused on how AI can reduce teachers’ administrative burden and make learning more personalized. Canva’s Tommy Wilson described a live demo for around 100 educators at KIMEP University, centred on lesson planning, content creation, and the delivery of higher-quality materials. Learnetic’s post after the summit also stressed that AI brings the most value when it supports the full teaching cycle rather than operating as disconnected tools.

That is a serious point.

Education systems do not need more digital complexity. They need useful simplification.

If AI saves time only in one moment of the workflow but creates confusion in five others, it is not solving the real problem. If it generates content but does not help teachers interpret progress, adapt instruction, or manage classroom reality, it remains superficial.

The products and models that will matter most over the next phase are not the loudest ones. They are the ones that reduce workload, improve continuity, and fit into the real rhythm of schools and universities.

4. Ethics and cybersecurity are moving from “important topics” to non-negotiable requirements

One of the clearest signs of maturity at the summit was the prominence of ethics and cybersecurity.

The official summit description explicitly positioned the event around safe and ethical digital education. The programme included “Human Factor in Cybersecurity: How Social Engineering Breaks Systems,” “Cybersecurity in AI-Powered Education: Risks, Protection, and Strategy,” and a broader direction titled “Trust, Responsibility, and AI Ethics: Building a Safe Digital World.” ALT University’s recap reinforced the same focus, naming ethical standards, personal data protection, and digital sovereignty among the key issues discussed.

This should change how education leaders think about procurement and partnerships.

Ethics can no longer sit in the final slide of a presentation. Cybersecurity cannot be treated as a technical issue for later. In education, these are design questions from the start. What data is collected? Who interprets it? How are risks communicated? What remains under human control? What happens to trust if a system produces speed but not accountability?

As AI becomes embedded in assessment, administration, and classroom support, governance becomes part of educational quality.

5. Central Asia is not watching from the sidelines

The summit also sent a regional signal that should not be ignored.

The UK government’s event listing described the Almaty summit as a landmark event that would inform AI strategy and teaching and learning across Central Asia. It also noted support from Kazakhstan’s Minister of Science and Higher Education and the invitation extended to universities across the country. ALT University reported participation from experts in education, technology, and public administration from 20 countries. Public posts from speakers added an important market-level detail: Canva’s team described Kazakhstan as “one to watch,” pointing to already strong adoption and serious interest from universities and educators, while another speaker highlighted direct engagement with the Deputy Minister of Education of the Kyrgyz Republic during the event.

This is not peripheral momentum.

It is a regional education market moving from curiosity to adoption.

And that matters for any organisation working with ministries, school systems, universities, or local delivery partners. The next phase of growth in education AI will not come from broad claims. It will come from regional ecosystems that are ready to connect policy, pedagogy, infrastructure, and implementation.

What education leaders should take from Almaty

The summit did not produce one single headline.

It produced a more important conclusion.

AI in education is entering a stricter phase.

A phase where systems will be judged not by how innovative they sound, but by how well they work inside classrooms, institutions, and national strategies.

A phase where inclusion is not a side narrative, but a design standard.

A phase where teacher workload is not a secondary concern, but a central success factor.

A phase where cybersecurity, ethics, and governance are not constraints on progress, but conditions for trustworthy progress.

That is why Almaty matters.

Not because it proved that AI is coming to education.

That question is already settled.

It matters because it showed what the real work now looks like: building education systems where AI is practical, inclusive, safe, and usable by the people who carry the system every day.
